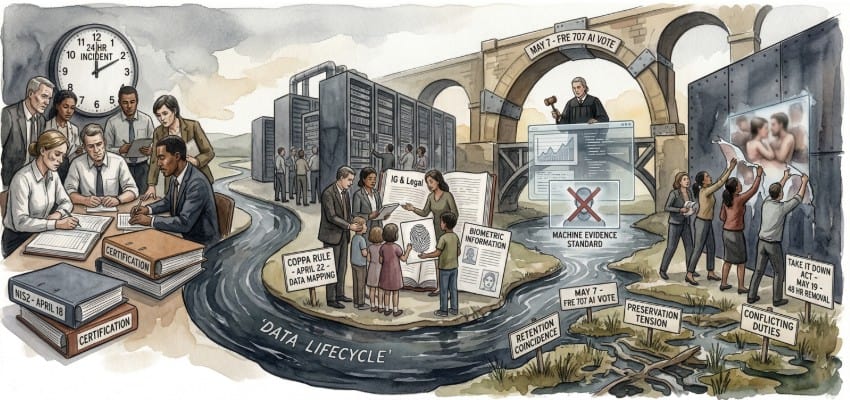

Editor’s Note: Four compliance deadlines converging in a 31-day window this spring will test the resilience of information governance frameworks across sectors. The EU’s NIS2 Directive hits its first hard enforcement checkpoint in Belgium on April 18, followed four days later by the FTC’s overhauled COPPA Rule reaching full compliance. In early May, the Advisory Committee on Evidence Rules votes on proposed FRE 707, which would set a federal admissibility standard for AI-generated evidence. And on May 19, the TAKE IT DOWN Act’s platform notice-and-removal requirements take effect.

For cybersecurity, data privacy, regulatory compliance, and eDiscovery professionals, the interaction between these mandates matters as much as any individual deadline. NIS2 demands documented incident response chains. COPPA requires defined data deletion schedules. The TAKE IT DOWN Act compels rapid content removal that may conflict with litigation preservation duties. And Rule 707 signals that AI-driven review tools will face evidentiary scrutiny. Each pulls the data lifecycle in a different direction, and compliance teams must manage all four threads at once.

Watch for early enforcement actions under NIS2 in Belgium and Germany, FTC guidance on COPPA’s biometric provisions, and the trajectory of Rule 707 as it moves through the Judicial Conference.

Industry News – Data Privacy and Protection Beat

The April–May Compliance Crunch: A Practitioner’s Calendar for eDiscovery and Information Governance

ComplexDiscovery Staff

Four regulatory deadlines land within 31 days of each other this spring, and each one rewires a different segment of the data lifecycle that eDiscovery, information governance, and cybersecurity professionals manage every day.

Between mid-April and late May, practitioners face the convergence of the European Union’s NIS2 Directive enforcement milestones, the FTC’s overhauled COPPA Rule compliance deadline, the Advisory Committee on Evidence Rules’ scheduled vote on proposed Rule 707 governing AI-generated evidence, and the TAKE IT DOWN Act’s platform compliance cutoff. Together, the cluster represents what may be the most concentrated stretch of compliance activity in years for professionals who sit at the intersection of cybersecurity, privacy, and litigation readiness.

No single regulatory shift here is surprising on its own — each has been widely signaled for months. What catches organizations off guard is the overlap and the fact that preparation for one mandate frequently collides with preparation for another. The same compliance officers, the same legal teams, the same budget lines are being pulled in four directions at once.

NIS2: Verification Deadlines Hit as Enforcement Ramps Up (April 18)

Belgium’s transposition of the NIS2 Directive, finalized in February 2026, set April 18 as the deadline for essential and important entities to submit verified compliance assessments. Belgian guidance permits two routes: entities using Belgium’s CyberFundamentals (CyFun®) framework need a Basic or Important level verification by an accredited conformity assessment body, while others may submit an ISO 27001 information security policy with an appropriate scope and statement of applicability. The date marks one of the first major enforcement checkpoints for NIS2 in a member state that has substantially operationalized its compliance framework.

Belgium is not alone in pressing forward. Germany’s NIS2 implementation act entered into force on December 6, 2025, and the BSI registration portal opened on January 6, 2026, with a registration deadline of March 6 for essential and important entities. That deadline has already passed, and the new regime brings roughly 29,500 entities under BSI supervision, compared with 4,500 previously, according to Reed Smith’s analysis of the German implementation. Italy and Denmark completed transposition earlier, and enforcement authorities across these jurisdictions are moving from rulemaking into active oversight. The broader EU picture remains uneven: multiple member states had still not completed transposition by late 2025, drawing public rebukes from the European Commission.

The penalty structure demands attention from any organization with European operations. Essential entities face fines of up to 10 million euros or 2 percent of global annual revenue, whichever is higher, according to the directive’s text. Important entities face a ceiling of 7 million euros or 1.4 percent of global revenue. Repeat offenses within three years trigger doubled fines.
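The ceilings described above reduce to a "higher of two amounts" calculation, which a short Python sketch can make concrete. The function name and the `NIS2_TIERS` table are hypothetical, the figures are the ones quoted in this article, and the sketch assumes the "whichever is higher" rule applies to both tiers. It ignores the repeat-offense doubling and any national variations.

```python
# Illustrative only: tier values are the figures quoted in the article text.
# Each entry is (fixed amount in EUR, share of global annual revenue).
NIS2_TIERS = {
    "essential": (10_000_000, 0.02),
    "important": (7_000_000, 0.014),
}


def nis2_fine_ceiling(entity_type: str, global_revenue_eur: float) -> float:
    """Return the higher of the fixed amount and the revenue-based amount."""
    fixed_eur, revenue_share = NIS2_TIERS[entity_type]
    return max(fixed_eur, revenue_share * global_revenue_eur)


if __name__ == "__main__":
    # Compare a mid-sized and a large organization across both tiers.
    for revenue in (100_000_000, 2_000_000_000):
        for tier in NIS2_TIERS:
            print(tier, revenue, round(nis2_fine_ceiling(tier, revenue)))
```

For an organization with 100 million euros in revenue, the fixed amount is the larger figure in both tiers. At 2 billion euros, the revenue-based amount takes over.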

For eDiscovery and information governance teams, the operational bite extends well beyond registration and verification. The directive mandates a 24-hour early warning for cybersecurity incidents, a 72-hour detailed report, and a one-month final remediation report — a cadence established under Article 23. That incident reporting timeline directly shapes evidence preservation protocols and litigation hold triggers. Organizations subject to NIS2 should review whether their document retention policies capture the version control, timestamps, and author attribution records that Articles 31 and 32 require.
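Teams that wire the reporting cadence into a litigation hold trigger may find it useful to compute the three deadlines from a single timestamp. The following is a minimal sketch, not a statement of how any regulator counts time. The function and key names are hypothetical, the final-report clock is assumed to start when the 72-hour notification is submitted, and "one month" is approximated as 30 days.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional


def nis2_reporting_deadlines(
    aware_at: datetime,
    notification_submitted_at: Optional[datetime] = None,
) -> dict:
    """Compute the three reporting deadlines from the moment of awareness."""
    early_warning = aware_at + timedelta(hours=24)
    incident_notification = aware_at + timedelta(hours=72)
    # Assumption: the one-month clock runs from submission of the 72-hour
    # notification; before submission, plan against its deadline instead.
    clock_start = notification_submitted_at or incident_notification
    # Assumption: "one month" is approximated as 30 days for planning.
    final_report = clock_start + timedelta(days=30)
    return {
        "early_warning": early_warning,
        "incident_notification": incident_notification,
        "final_report": final_report,
    }


if __name__ == "__main__":
    aware = datetime(2026, 4, 18, 9, 0, tzinfo=timezone.utc)
    for name, due in nis2_reporting_deadlines(aware).items():
        print(f"{name}: {due.isoformat()}")
```

Using timezone-aware timestamps avoids ambiguity when the incident response team and the reporting authority sit in different time zones.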

CISOs and security operations teams face a parallel concern: the directive’s risk management requirements under Article 21 demand documented cybersecurity measures covering incident handling, business continuity, supply chain security, and encryption policies. When those documented measures become the subject of regulatory inquiry or litigation, the IG team’s ability to locate, preserve, and produce them on short timelines determines whether the organization can demonstrate compliance — or gets caught flat-footed.

Practitioners working for U.S.-based companies with EU subsidiaries or clients face a practical coordination challenge: aligning NIS2’s incident reporting cadence with existing U.S. breach notification regimes without creating conflicting preservation obligations or inadvertent spoliation risks.

COPPA’s First Overhaul in Over a Decade (April 22)

Four days after Belgium’s NIS2 verification deadline, the FTC’s amended Children’s Online Privacy Protection Act Rule reaches its compliance date. The FTC finalized the amendments on January 16, 2025, published them in the Federal Register on April 22, 2025, and gave regulated entities one year — until April 22, 2026 — to bring operations into compliance. It is the first substantial update to the COPPA Rule since 2013, and it arrives at a moment when children’s data intersects with AI, biometrics, and cross-platform tracking in ways the original rule never anticipated.

The definitional expansion is where the eDiscovery implications surface most sharply. “Personal information” under the amended rule now includes biometric identifiers: fingerprints, handprints, retina and iris patterns, genetic data, voiceprints, gait patterns, facial templates, and faceprints. It also covers government-issued identifiers such as state ID numbers, birth certificate numbers, and passport numbers, according to the FTC’s final rule. For any organization that collects data from children under 13 — or that processes data that might include children’s data as part of a larger dataset — the scope of what must be identified, preserved, and produced in response to a legal hold or discovery request has expanded substantially.

The new rule requires operators to obtain separate verifiable parental consent before disclosing children’s personal information for purposes that are not “integral” to the operator’s website or service. That distinction between integral and non-integral use introduces a classification exercise that information governance teams will need to embed in their data maps. When litigation arises, the question of whether a particular data use was integral will become a factual dispute, and the underlying records — consent logs, purpose-limitation documentation, data-sharing agreements — will be discoverable.

Perhaps the most consequential change for IG professionals is the mandate for a written data retention policy specifically addressing children’s personal information. The FTC has stated that indefinite retention will rarely, if ever, satisfy the rule’s reasonableness standard. Organizations must define what children’s data they collect, why they collect it, and when they will delete it. That written policy becomes both a compliance artifact and a potential exhibit in any enforcement action or civil litigation.
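One way to make a written retention policy operational is to express each entry as data, so that a deletion date can be computed for every record. The sketch below is illustrative: the `RetentionRule` class, its field names, and the 90-day period are hypothetical and are not drawn from the FTC rule. The constructor rejects non-positive periods to reflect the point that indefinite retention is unlikely to pass muster.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class RetentionRule:
    """One entry in a written retention schedule: what, why, and how long."""
    data_category: str   # what is collected
    purpose: str         # why it is collected
    retention_days: int  # how long it is kept before deletion

    def __post_init__(self) -> None:
        # A finite, positive period is required; there is no "keep forever".
        if self.retention_days <= 0:
            raise ValueError("retention_days must be a positive number of days")

    def delete_by(self, collected_on: date) -> date:
        """Date by which a record collected on `collected_on` should be deleted."""
        return collected_on + timedelta(days=self.retention_days)

    def is_overdue(self, collected_on: date, today: date) -> bool:
        """True once the deletion date has passed."""
        return today > self.delete_by(collected_on)


if __name__ == "__main__":
    rule = RetentionRule("voiceprint", "voice login", retention_days=90)
    print(rule.delete_by(date(2026, 4, 22)))
```

A real schedule would also need a documented exception path for records under legal hold, which this sketch leaves out.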

Security teams take note: operators must also establish a written information security program proportionate to the sensitivity of the data collected. For CISOs at companies operating child-directed services — or services with mixed-age audiences — this requirement creates a new documentation obligation that will be subject to discovery in any FTC investigation or private litigation. For organizations already subject to state biometric privacy laws like Illinois’s Biometric Information Privacy Act (BIPA), the COPPA amendments create a layered compliance environment where federal and state obligations interact in ways that litigation teams must map carefully.

FRE 707: The Vote That Could Reshape AI Evidence Standards (May 7)

Two weeks after COPPA’s deadline, the Advisory Committee on Evidence Rules is currently scheduled to vote on proposed Federal Rule of Evidence 707, a provision that would for the first time establish a federal standard for the admissibility of machine-generated evidence. The Advisory Committee voted eight-to-one to release the draft rule for public comment, and the Judicial Conference’s Committee on Rules of Practice and Procedure approved it on June 10, 2025. That comment period closed on February 16, 2026. A May 7 committee vote is the next gate. Even on an affirmative vote, the rule would still have to pass through the full Judicial Conference, the Supreme Court, and congressional review under the Rules Enabling Act, likely placing any effective date at least a year away.

Rule 707 addresses a gap that litigators and eDiscovery practitioners have been navigating without a roadmap: when AI or machine-learning systems produce outputs offered as evidence — predictive analytics in patent disputes, algorithm-driven risk scores in employment cases, automated contract analysis in commercial litigation — what reliability standard applies? Under the proposed rule, such outputs would be held to the same criteria as expert testimony under Rule 702. The proponent would need to demonstrate that the AI output rests on sufficient facts or data, that it was produced through reliable principles and methods, and that those methods were applied reliably to the case’s facts.

The eDiscovery ramifications extend well beyond the courtroom. If Rule 707 advances, parties using technology-assisted review, predictive coding, or AI-driven document classification in the discovery process will face heightened scrutiny over their tools’ methodologies. The rule’s drafters have signaled coordination with the Civil Rules Committee on disclosure requirements around source code and trade secrets — a development that could force producing parties to reveal proprietary details about their review platforms. Courts would also examine whether training data are representative enough to produce accurate outcomes for the population relevant to the case, and whether opposing parties and independent researchers have had access to test the system’s performance.

Cybersecurity forensic tools face analogous exposure. Network intrusion detection systems, malware analysis platforms, and digital forensic imaging software increasingly incorporate machine-learning components. If Rule 707 establishes that machine-generated outputs must meet Rule 702 reliability standards, defense counsel in cybercrime and data breach cases will have a new basis to challenge the admissibility of forensic evidence generated by these systems. Security teams that deploy AI-driven threat detection should begin cataloging the validation histories and error rates of those tools — documentation that will be essential if the outputs are later offered as evidence.

Practitioners should track how Rule 707 interacts with the growing number of state courts already grappling with AI evidence. Texas, California, and several other states have issued local rules or standing orders addressing AI-generated filings. A federal rule would not preempt state evidence codes, but it would establish a benchmark that state rulemakers — and opposing counsel — will reference.

Even if the May 7 vote is only one step in a multi-year rulemaking process, the direction of travel is clear. Organizations that rely on AI-assisted litigation tools should begin documenting the validation, training data provenance, and error rates of those systems now, rather than scrambling to reconstruct that evidence trail after the rule takes effect.
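Documenting error rates usually starts with a labeled validation sample. The following sketch shows how precision, recall, and an overall error rate can be derived from the four counts of a confusion matrix. The `ValidationSample` class and the tool name are hypothetical, and the metrics are standard ones chosen for illustration rather than measures specified by the proposed rule.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ValidationSample:
    """Counts from comparing a tool's calls against human-reviewed labels."""
    tool_name: str
    true_positives: int   # tool said relevant, reviewers agreed
    false_positives: int  # tool said relevant, reviewers disagreed
    false_negatives: int  # tool said not relevant, reviewers disagreed
    true_negatives: int   # tool said not relevant, reviewers agreed

    @property
    def total(self) -> int:
        return (self.true_positives + self.false_positives
                + self.false_negatives + self.true_negatives)

    @property
    def precision(self) -> float:
        denom = self.true_positives + self.false_positives
        return self.true_positives / denom if denom else 0.0

    @property
    def recall(self) -> float:
        denom = self.true_positives + self.false_negatives
        return self.true_positives / denom if denom else 0.0

    @property
    def error_rate(self) -> float:
        wrong = self.false_positives + self.false_negatives
        return wrong / self.total if self.total else 0.0


if __name__ == "__main__":
    sample = ValidationSample("hypothetical-classifier", 80, 20, 10, 890)
    print(f"precision={sample.precision:.3f} recall={sample.recall:.3f} "
          f"error_rate={sample.error_rate:.3f}")
```

Storing one such record per tool version and validation date gives counsel a dated trail to point to if the tool's reliability is later challenged.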

TAKE IT DOWN Act: Platform Compliance and the New Content Preservation Calculus (May 19)

The final deadline in this spring’s cluster arrives on May 19, when the TAKE IT DOWN Act’s platform compliance requirements take effect. Signed into law by President Trump on May 19, 2025, the Act — formally the Tools to Address Known Exploitation by Immobilizing Technological Deepfakes on Websites and Networks Act — criminalizes the nonconsensual publication of intimate images, including AI-generated deepfakes, and requires covered platforms to establish a notice-and-removal process. The criminal provisions, carrying penalties of up to three years of imprisonment, took effect immediately upon signing. Platforms have had one year to build the required takedown infrastructure.

The operational mechanics are specific: upon receiving a valid removal request, a covered platform must take down the identified content within 48 hours and make “reasonable efforts” to identify and remove identical copies, according to the statute’s text. The Act defines covered platforms using the same “interactive computer service” framework from Section 230 of the Communications Decency Act, encompassing any website, online service, or application that primarily provides a forum for user-generated content.

For eDiscovery professionals, the 48-hour clock creates a tension that did not previously exist at the federal level. Content subject to a takedown request may also be relevant to pending or anticipated litigation — harassment claims, deepfake-related defamation suits, or criminal prosecutions. The Act, as currently drafted, does not include an explicit litigation hold exception, creating potential friction between takedown duties and preservation obligations. Absent carefully designed preservation workflows, platforms that remove content to comply with the removal mandate risk inadvertently eliminating evidence that parties later seek in related legal proceedings.

Information governance teams at platforms and the outside counsel who advise them need to assess how their content moderation workflows interact with legal hold processes. A 48-hour removal window leaves little room for the deliberate legal review that preservation obligations typically require. Security and trust-and-safety teams, which typically manage content detection and removal operations, will need to coordinate with legal on a case-by-case triage process that did not exist before this statute.
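The coordination problem can be pictured as a triage step that runs when a removal request arrives: compute the 48-hour deadline, check whether the content is tied to a known legal hold, and route accordingly. The sketch below is one possible shape for that step, not a workflow prescribed by the Act. All names are hypothetical, and what legal does after escalation (including whether any restricted copy may be kept) is left outside the code.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone

REMOVAL_WINDOW = timedelta(hours=48)  # the removal window described in the article


@dataclass(frozen=True)
class TakedownRequest:
    content_id: str
    received_at: datetime

    @property
    def removal_deadline(self) -> datetime:
        return self.received_at + REMOVAL_WINDOW


def triage(request: TakedownRequest, held_content_ids: set, now: datetime) -> dict:
    """Return the ordered steps and time remaining for one request."""
    on_hold = request.content_id in held_content_ids
    # Hypothetical policy: legal is looped in before removal when a hold exists.
    steps = ["escalate_to_legal"] if on_hold else []
    steps.append("remove_from_platform")
    remaining = request.removal_deadline - now
    return {
        "on_legal_hold": on_hold,
        "steps": steps,
        "hours_remaining": remaining.total_seconds() / 3600,
    }


if __name__ == "__main__":
    req = TakedownRequest("c-1", datetime(2026, 5, 19, 12, 0, tzinfo=timezone.utc))
    print(triage(req, {"c-1"}, datetime(2026, 5, 20, 12, 0, tzinfo=timezone.utc)))
```

Removal stays in the step list in both branches, since the sketch assumes a hold changes who is consulted rather than whether the deadline applies.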

The Act’s enforcement mechanism runs through the FTC, which treats noncompliance with the notice-and-removal process as a violation of an FTC rule under Section 18 of the FTC Act. Civil penalties currently stand at up to $53,088 per violation, according to the FTC’s current penalty schedule. That per-violation structure could produce substantial aggregate exposure for platforms with high volumes of user-generated content.

Constitutional challenges remain a live possibility. The Electronic Frontier Foundation and the Center for Democracy & Technology have raised concerns about the law’s breadth, arguing that its language could be used to compel removal of lawful speech. Because the Act regulates content based on its nature (sexually explicit depictions), it could face strict scrutiny under the First Amendment if challenged. Courts in multiple states, including Illinois, Indiana, Minnesota, Nebraska, Texas, and Vermont, have upheld analogous state laws against free-speech challenges, according to a Congressional Research Service analysis. But no federal court has yet tested the TAKE IT DOWN Act’s framework, and whether the notice-and-removal process is narrowly tailored remains an open legal question. Given the rapidly evolving jurisprudence on deepfake and nonconsensual-image laws, early federal test cases could reshape how the Act is applied in practice.

Where the Deadlines Intersect — and Why Staffing Matters

The operational difficulty of this spring’s compliance cluster lies not in any single mandate but in the way they interact. A multinational technology company that operates a user-generated content platform, collects data from users who may include children, maintains EU operations, and uses AI tools in its litigation support workflow could face compliance obligations under all four regimes within a single month.

Consider the data preservation implications alone. NIS2 demands a documented evidence chain for cybersecurity incidents with strict reporting timelines. COPPA now requires written retention policies with defined deletion schedules for children’s data. The TAKE IT DOWN Act mandates rapid content removal that could conflict with preservation duties. And if Rule 707 advances, the evidentiary foundations of AI-assisted review tools themselves will be subject to challenge. Each regime pulls the data lifecycle in a different direction — retain, delete, remove, document — and the IG professional’s job is to hold all four threads simultaneously without letting any of them snap.

The human reality behind the regulatory calendar is worth acknowledging. At most organizations, the compliance officer who manages the COPPA data mapping exercise is the same person helping the security team document NIS2 incident response procedures, the same person reviewing preservation protocols for TAKE IT DOWN requests, and the same person fielding questions from litigation counsel about AI tool validation in light of Rule 707. Budget and headcount were planned before this four-deadline pileup became visible, and reallocating resources mid-quarter is rarely simple. Organizations that have not already begun cross-functional coordination between privacy, security, litigation support, and records management will find April an expensive month to start.

For practitioners building or updating their compliance calendars, the reference points are: April 18 for NIS2 verification in Belgium (with Germany’s BSI registration already past due), April 22 for COPPA’s full compliance deadline, May 7 for the FRE 707 Advisory Committee vote, and May 19 for the TAKE IT DOWN Act’s platform compliance cutoff. The question that matters most is not whether any single deadline will be met — it is whether the organization’s governance framework can absorb all four without fracturing.
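The reference dates above can be kept as a small data structure that a compliance calendar or dashboard reads from. This minimal sketch takes 2026 as the year for all four dates, consistent with the COPPA and TAKE IT DOWN timelines described earlier. The dictionary keys and function names are hypothetical.

```python
from datetime import date

# Dates as given in the article; 2026 is assumed for all four.
DEADLINES = {
    "NIS2 verification (Belgium)": date(2026, 4, 18),
    "COPPA Rule compliance": date(2026, 4, 22),
    "FRE 707 Advisory Committee vote": date(2026, 5, 7),
    "TAKE IT DOWN Act platform compliance": date(2026, 5, 19),
}


def window_days(deadlines: dict) -> int:
    """Number of days between the earliest and latest deadline."""
    return (max(deadlines.values()) - min(deadlines.values())).days


def days_remaining(today: date, deadlines: dict) -> dict:
    """Days from `today` to each deadline (negative once a date has passed)."""
    return {name: (due - today).days for name, due in deadlines.items()}


if __name__ == "__main__":
    print("window:", window_days(DEADLINES), "days")
    for name, days in days_remaining(date(2026, 4, 1), DEADLINES).items():
        print(f"{name}: {days} days")
```

The window calculation confirms the 31-day span between the first and last deadline.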

What is your organization doing to prepare for the convergence of these four regulatory deadlines — and which one keeps you up at night?

News Sources

- The NIS2 Law (Centre for Cybersecurity Belgium)

- Germany Implements NIS2: Immediate Effect, Broad Scope, Near-Term Registration (Reed Smith)

- Children’s Online Privacy Protection Rule (Federal Register)

- Unpacking the FTC’s COPPA Amendments: What You Need to Know (White & Case)

- AI in the Courtroom: How Proposed Rule 707 Could Shape Evidence Standards (Steptoe)

- Safeguarding the Courtroom from AI-Generated Evidence: Federal Rule of Evidence 707 Approved by Judicial Conference (Nelson Mullins)

- Take It Down Act Requires Online Platforms To Remove Unauthorized Intimate Images and Deepfakes When Notified (Skadden)

- The TAKE IT DOWN Act: A Federal Law Prohibiting the Nonconsensual Publication of Intimate Images (Congressional Research Service)

- The TAKE IT DOWN Act’s 48-Hour Deadline: What Does It Mean When Section 230 Still Shields Platforms? (University of Baltimore Law Review)

- NIS2 Directive Transposition Tracker (European Cyber Security Organisation)

Assisted by GAI and LLM Technologies

Source: ComplexDiscovery OÜ
