Editor’s Note: FutureLaw 2026’s Day One morning argued for who should govern AI. The mid-day and early-afternoon program made the messier case for who should build with it. Six sessions covered here — a trust panel, a legal-ops panel, a satirical keynote on surveillance, a Harvey-led keynote on pilot economics, an infrastructure panel, and an education panel — converged on a single procedural posture: invest in foundations before features. For cybersecurity, data privacy, regulatory compliance, and eDiscovery professionals, the architecture-and-observability emphasis lands where the work actually happens. AI agents as a new infrastructure actor, audit trails as a prerequisite for trust, and the SALI legal data standard as cross-border metadata infrastructure all map directly to the concerns of ComplexDiscovery readers.

ComplexDiscovery OÜ is on site in Tallinn, covering FutureLaw 2026 with practitioner-focused reporting and post-event analysis for cybersecurity, privacy, regulatory compliance, and eDiscovery professionals.

FutureLaw 2026 Day One, after lunch: from regulating AI to building with it

ComplexDiscovery Staff

If the morning of Day One at FutureLaw 2026 asked who governs the governors, the mid-day and early-afternoon sessions asked a harder question: who builds, and on what foundations? Across four panels (on full-stack trust, legal operations, legal infrastructure and legal education) and two keynotes (a cautionary one on surveillance and a pilot-economics one from Harvey), the program in Tallinn moved from the politics of AI to the construction of it.

Trust as a full-stack problem

The first post-coffee panel, “Full-Stack Trust: From Infrastructure to Interface,” was moderated by Laura Kask, CEO at Proud Engineers and a former Chief Legal Officer for the Estonian government’s CIO, who has spent her career building digital-state infrastructure. She invoked former Estonian President Toomas Hendrik Ilves’s line that “you can’t bribe a computer,” then quickly noted that trusting machines takes work the slogan does not capture. “It’s a mixture of technology, mixture of legal framework, but also organizational matters,” she said.

Marek Laskowski, CIO at the Polish firm DZP, framed AI agents not as a feature but as a new actor inside a law firm’s infrastructure. “When we have a new actor in our environments, we have a new risk,” he said. “People user doesn’t equal AI agents. We have to focusing on how preparing the infrastructure strictly dedicated to agent activity.” His advice for managing partners under the EU’s NIS2 directive and DORA: verify third-party landscapes against architecture diagrams, not declarations. “Every our providers, AI providers declare that models never teach our data, never use our data to learn,” he said. “Okay, I trust that is it, but who check it? Is it real or not real?”

Jamie Tso, founder of LegalQuants, argued the trust problem is largely an interface problem — that a tool’s traceability back to source text, paragraph by paragraph, is what produces lawyer confidence. Damien Riehl, co-host of the conference and a solutions champion at Clio, put the contrarian view directly: he now trusts Claude Code over many of the engineers he has worked with. “There’s arguably only one coder in the world that’s better than the AI,” he said, asking the room how soon the same flip would happen for lawyers. He closed the panel with a frame the room repeated for the rest of the day — the shift from humans “in the loop” to humans “on the loop,” watching the reasoning rather than approving each step.

The legal ops air-traffic controllers

The next panel — “Legal Ops 2.0: Automation, Analytics & Agile Legal Departments” — was moderated by Max Hubner, founder and managing director of The Change Lawyer. Ieva Melke, executive management board member and head of legal affairs at TV3 Group Latvia, captured the in-house mood in one image. “We feel like air traffic controllers every day,” she said. “There’s 100 planes landing, 80 planes departing, but the airport asks us to take 30 more planes the same day and also a helicopter, which we have no clue how to launch.” A survey she ran across three Baltic legal teams returned a trust score of 6.6 out of 10 for AI output, and three repeating frustrations: too many tools, IT departments restricting which ones can be used, and hallucinations — including AI tools answering in Russian when prompted in Latvian or Estonian.

Mori Kabiri, founder of Legal Operations KPIs, urged the room to start with the destination rather than the technology. “If one doesn’t know to which port one is sailing, the wind is not favorable,” he said, paraphrasing a line often attributed to Seneca and applying it to legal operations. Kabiri introduced it as a “famous answer” but did not name the source on stage. The mistake, he argued, is leading vendor conversations from feature sets rather than from a defined business outcome with a baseline KPI. Viktor von Essen, founder and CEO at the German legal-tech company Libra, argued legal departments should reposition themselves from cost centers to revenue generators by accelerating sales cycles and internalizing work that used to go to external counsel.

Riehl, returning at the close, delivered the panel’s sharpest data point — an unnamed Fortune 20 company has built 150 internal workflows it used to outsource to law firms, with the CEO instructing employees: “Do not talk to an in-house lawyer unless absolutely necessary. If necessary, talk to an in-house lawyer, but almost under no circumstances do you go to an external lawyer.” He framed it as a warning. “This is not the future, this is today,” he said. “The future is here. It’s just not evenly distributed.”

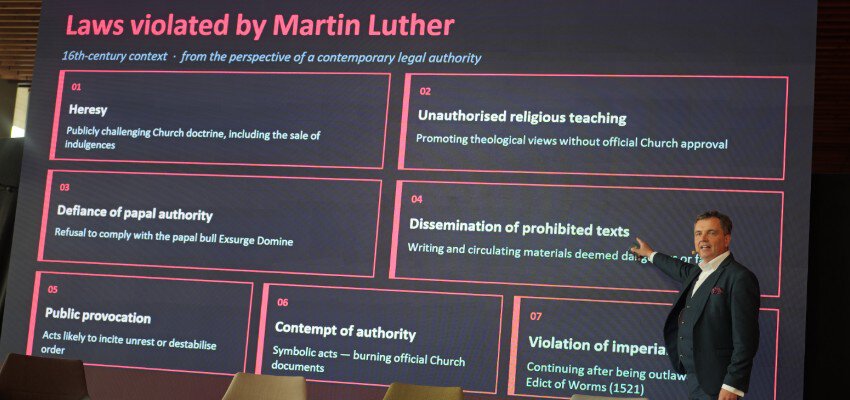

A cautionary keynote: what if Martin Luther had met modern surveillance?

The afternoon’s most performative session belonged to Dr. Carri Ginter, a Sorainen partner and Jean Monnet Professor of EU Law at the University of Tartu, who teamed with Regor Fotso, an Estonian engineer at Helvora and Swedbank, to demonstrate a hypothetical police AI they called OnlyWatch. “Last year I spoke about privacy,” Ginter said. “Estonia canceled some of the projects. We haven’t introduced the AI on the top of the public cameras.” The pair then ran the system against history. Their thought experiment: had a 16th-century church had access to drones, voice prints, behavioral databases and metadata, it would have flagged Martin Luther as a 99% probable lawbreaker before he reached the Wittenberg church door. “AI…could have avoided Protestant reformation,” Ginter said.

The fallout, the system projected: more monarchies in Europe, suppressed scientific revolution, lower literacy, and a Bible never translated into German. The argument cut against the morning’s tone of pragmatic adoption. “Historically, civic society has been developing by challenging rules,” Ginter said. “We critically need to leave the space so that we can keep breaking the law.” Riehl, hosting, accepted the point. “If you’re going to take one thing away from this conference, break the law,” he said.

Joe Cohen and the Jevons paradox

Joe Cohen, a legal innovation partner at Harvey and the former director of advanced client solutions at Charles Russell Speechlys, used his keynote, “The Reality of Legal Intelligence: Moving from AI Hype to Core Infrastructure,” to lay out a pilot-design framework for generative AI deployment. Pilots should run two to 12 weeks, include cohorts balanced by practice area and seniority, and capture data granular enough to distinguish short-form drafting from long-form drafting and template-based drafting from prompt-based drafting. “If you’re just capturing high level, how good is it for drafting, I don’t think we’re doing well enough,” he said.
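
For readers who want to picture what that level of granularity could look like in practice, here is a minimal sketch in Python. It is not Cohen's or Harvey's schema: the `DraftingObservation` record, its field names, the `minutes_saved` measure and the sample values are all assumptions made up for illustration. The only point is that each observation carries the drafting length and method, so results can be split along the lines the keynote described.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class DraftingObservation:
    """One pilot data point (hypothetical schema, not from the keynote)."""
    practice_area: str
    seniority: str
    length: str           # "short" or "long"
    method: str           # "template" or "prompt"
    minutes_saved: float  # illustrative self-reported measure


def summarize(observations):
    """Average minutes saved per (length, method) drafting category."""
    buckets = defaultdict(list)
    for obs in observations:
        buckets[(obs.length, obs.method)].append(obs.minutes_saved)
    return {key: mean(values) for key, values in buckets.items()}


# Invented sample values, used only to show the grouping.
sample = [
    DraftingObservation("corporate", "associate", "short", "template", 10.0),
    DraftingObservation("corporate", "partner", "short", "template", 20.0),
    DraftingObservation("disputes", "associate", "long", "prompt", 45.0),
]
summary = summarize(sample)
```

A pilot that records only a single "drafting" score could not produce this split after the fact, which is the gap the keynote warned about.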

Cohen’s broader argument cut against an easy assumption — that AI productivity gains would show up as law firm revenue contraction. The data, he said, does not yet show it. United States survey data from approximately six months ago indicated 90% of legal work is still billed hourly, AI usage is heavy at adopting firms, and yet 2025 financial reporting from large firms shows revenue and profit-per-equity-partner growth in the high single digits. “What’s going on here?” Cohen asked. His read: a Jevons paradox dynamic, in which a service getting cheaper and faster increases demand for it. “Clients are buying more legal services,” he said. The pricing implication, he argued, is a decoupling of time and value — and an opportunity for firms to charge for the speed itself, or to package legal expertise as software.

From code to clause — the infrastructure debate

The next panel — “From Code to Clause: How AI Is Rewriting Legal Infrastructure” — was moderated by Malin Männikkö, product lead at Newcode.ai. Tso, returning to the stage, opened with a deceptively simple thesis: “Lawyers only have one job, which is to make clients happy.” An ideal AI-era workflow, he said, puts the client, the lawyer and the AI in one electronic space rather than burying the AI’s contribution behind a polished memo. Dr. Nils Feuerhelm, VP Legal Operations at Noxtua, argued that the bigger infrastructure problem is the document itself. “We still send PDFs,” he said, and law firms upload them into AI tools that re-extract the structured data the lawyer just stripped out. “AI could be something that helps driving the change, but it’s not the solution for the change.”

Aidas Kavalis, founder of the Lithuanian legal-tech company Amberlo (now part of Septia Group), said most firms are still document-first in their workflows, but the shift to data-backbone infrastructure is happening — slowly. The panel converged on a familiar absence: a top-down legal data standard. Riehl, fielding the audience question on cross-border standardization, pointed to SALI — the Standards Advancement for the Legal Industry — which he said maintains roughly 18,000 tags as of early 2026, spanning practice areas, agreement types and document features, with cross-language unique identifiers. “There are many ways to express that linguistically,” Riehl said. “All of those merger agreements in all of those languages have a single unique identifier that you can now be able to use cross border.”
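
The idea Riehl described, many linguistic expressions resolving to one identifier, can be sketched in a few lines of Python. This is an illustration of the concept only, not the SALI data model or API: the identifier `EXAMPLE-0001`, the `TAGS` table, and the helper names are hypothetical, and the translated labels are illustrative rather than taken from the standard.

```python
# Hypothetical tag table. The identifier and labels are invented for
# illustration and are not drawn from the SALI standard.
TAGS = {
    "EXAMPLE-0001": {
        "en": "merger agreement",
        "de": "Verschmelzungsvertrag",
        "et": "ühinemisleping",
    },
}


def build_index(tags):
    """Map every label, in every language, to its single identifier."""
    index = {}
    for tag_id, labels in tags.items():
        for label in labels.values():
            index[label.casefold()] = tag_id
    return index


INDEX = build_index(TAGS)


def tag_for(label):
    """Return the identifier for a label, or None if it is unknown."""
    return INDEX.get(label.strip().casefold())
```

With a table like this, a document labeled in English in one jurisdiction and in German or Estonian in another would carry the same identifier, which is what makes cross-border search and comparison possible without translating the label itself.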

Beyond the lecture: the education problem

The final session covered in this report — “Beyond the Lecture: Cultivating Global Legal Minds Through Cognitive Diversity” — was moderated by Archil Chochia, director of the Department of Law at TalTech Law School, with Christina Blacklaws (former president of the Law Society of England and Wales and chair of LawtechUK), Pınar Çağlayan Aksoy (associate professor of civil law at Bilkent University, Turkey) and Paul James Cardwell (professor of law and vice dean for education at King’s College London). Blacklaws opened with the diagnosis. “Legal education is facing an inflection point,” she said. “The traditional model of legal education is no longer enough.” Her case: every matter now has an international dimension, AI demands a shift from teaching students what to think to how to think, and the profession has only just begun valuing cognitive diversity in its hiring.

Aksoy contrasted the entry standards of two decades ago — legal knowledge, language skills, a good grade-point average — with the demands now placed on a 2026 graduate, who must reason across hybrid legal systems, transnational arbitration, AI-assisted practice and digital infrastructure. “They should be bilingual in a deeper sense,” she said — “fluent in legal doctrine and fluent in legal translation across systems, institutions, technologies and cultures.” Cardwell, drawing on King’s College’s perspective, played the candid skeptic about whether universities can move at the pace required. “We need a working group, then we need a committee, then we need another working group, then we ditch it, then we have a relaunch,” he said. National bar regulation, faculty risk-aversion, large cohorts that depend on lecture-format teaching, and the recent return to closed-book exams in response to AI all push against the kind of curriculum shift the panel advocated.

What Day One built

Across the mid-day and early-afternoon stretch, speakers returned to the same insistence. Trust required architecture diagrams, observability, and audit trails — not belief. Legal operations required destinations before tools. Enforcement required friction, not only efficiency. AI pilots required granular data to be worth running. Legal infrastructure required standards to clear the document fog. Legal education required both a different curriculum and the institutional courage to deliver it.

The professional question these sessions leave on the table is structural. Building any of this requires roles the profession has not historically rewarded — legal engineers, prompt curators, data taxonomists, operations leads with KPI literacy. Who pays for that work, who supervises it, and who gets credit for it when the bill arrives — those are questions Day One’s late-afternoon program and Day Two inherit.

If your firm or department had to start one of these builds tomorrow, would you know which port you were sailing for?

News Sources

- FutureLaw 2026 Speakers (futurelaw.ee)

- Laura Kask — CEO at Proud Engineers (futurelaw.ee)

- Max Hubner — The Change Lawyer (futurelaw.ee)

- Dr Carri Ginter — Sorainen / University of Tartu (futurelaw.ee)

- Joe Cohen — Legal Innovation Partner at Harvey (futurelaw.ee)

- Malin Männikkö — Product Lead at Newcode.ai (futurelaw.ee)

- Archil Chochia — Director, TalTech Law School (futurelaw.ee)

- Ieva Melke — TV3 Group Latvia (futurelaw.ee)

Assisted by GAI and LLM Technologies

Source: ComplexDiscovery OÜ

ComplexDiscovery’s mission is to enable clarity for complex decisions by providing independent, data‑driven reporting, research, and commentary that make digital risk, legal technology, and regulatory change more legible for practitioners, policymakers, and business leaders.